
For several years, the field of quality checking tools has been largely stagnant, with only incremental updates to established tools. Recently, TAUS’ Dynamic Quality Framework (DQF) and the EU’s Multidimensional Quality Metrics (MQM) have set the stage for new developments in quality assessment, thanks to their fresh approaches and push for standardization. In this blog, we’ll review three new market entrants that are hoping to shake up this area. But let’s start with an overview of the types of tools out there:

Both of these approaches serve their purpose and help both LSPs and their clients, but three companies are bringing energy to an area that has been something of a language technology backwater. In CSA Research’s briefings with the developers of these tools, we saw encouraging signs that quality assessment is taking off again.

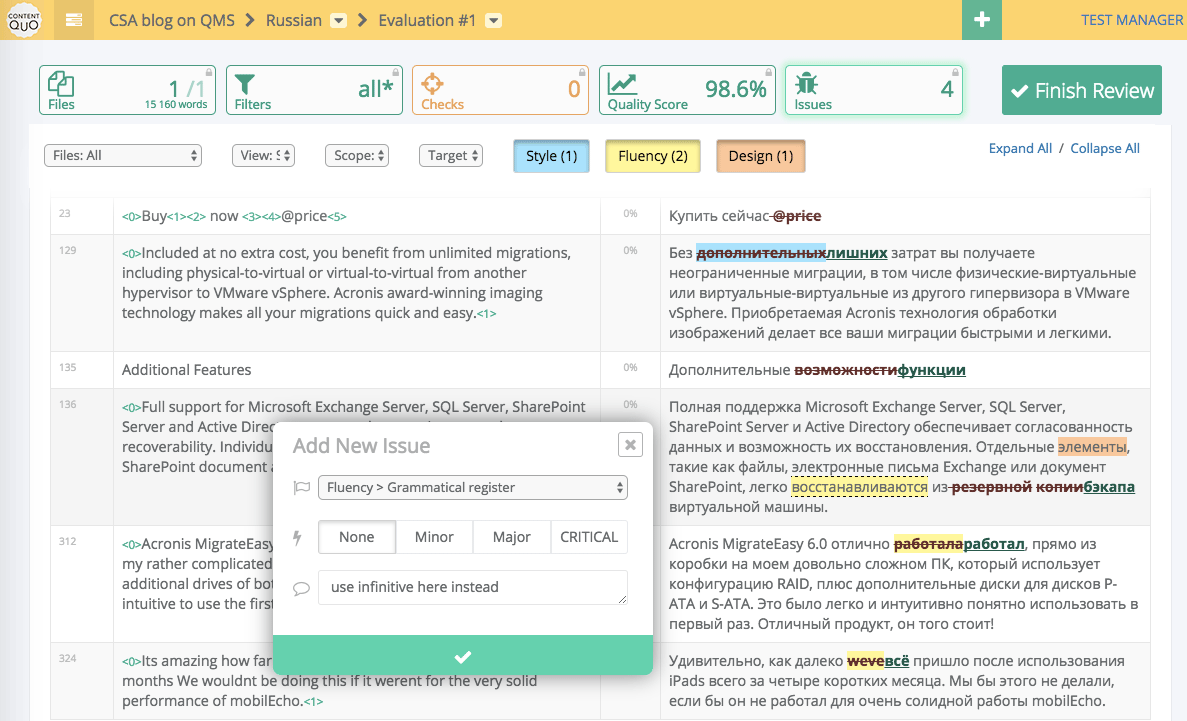

ContentQuo

The first tool we reviewed is ContentQuo – from an Estonian start-up of the same name – which is currently nearing market roll-out. We talked to Kirill Soloviev, the company’s product lead, about the approach taken by this application. Soloviev told us that he is impressed by the theories and frameworks behind MQM and TAUS DQF, but that the practical implementations have been lacking. His goal is to combine multiple quality methods into one platform, rather than develop a single approach, and to aim at quality management rather than just assessment. It therefore includes support for analytic error quality with MQM/DQF, manual translation review, management of quality inspections, automated scoring, change tracking, analytics and dashboards, in-browser editing for over 20 formats, and real-time collaboration.

Source: ContentQuo

The tool is entering private beta, but Soloviev is already planning to add modules for automatic checking and other quality models, as well as integration with several leading TMS tools and the TAUS DQF Dashboard. Among the tools we looked at, it stands out for its emphasis on “holistic” rather than just analytic quality: It focuses on the entirety of the text in use – including how it relates to function and appearance – not just on abstract errors that may or may not matter in a particular context. Soloviev emphasizes that the tool is trying to drive a shift to quality management rather than assessment. ContentQuo is aiming at mid-size translation buyers who want to increase their control over quality processes.

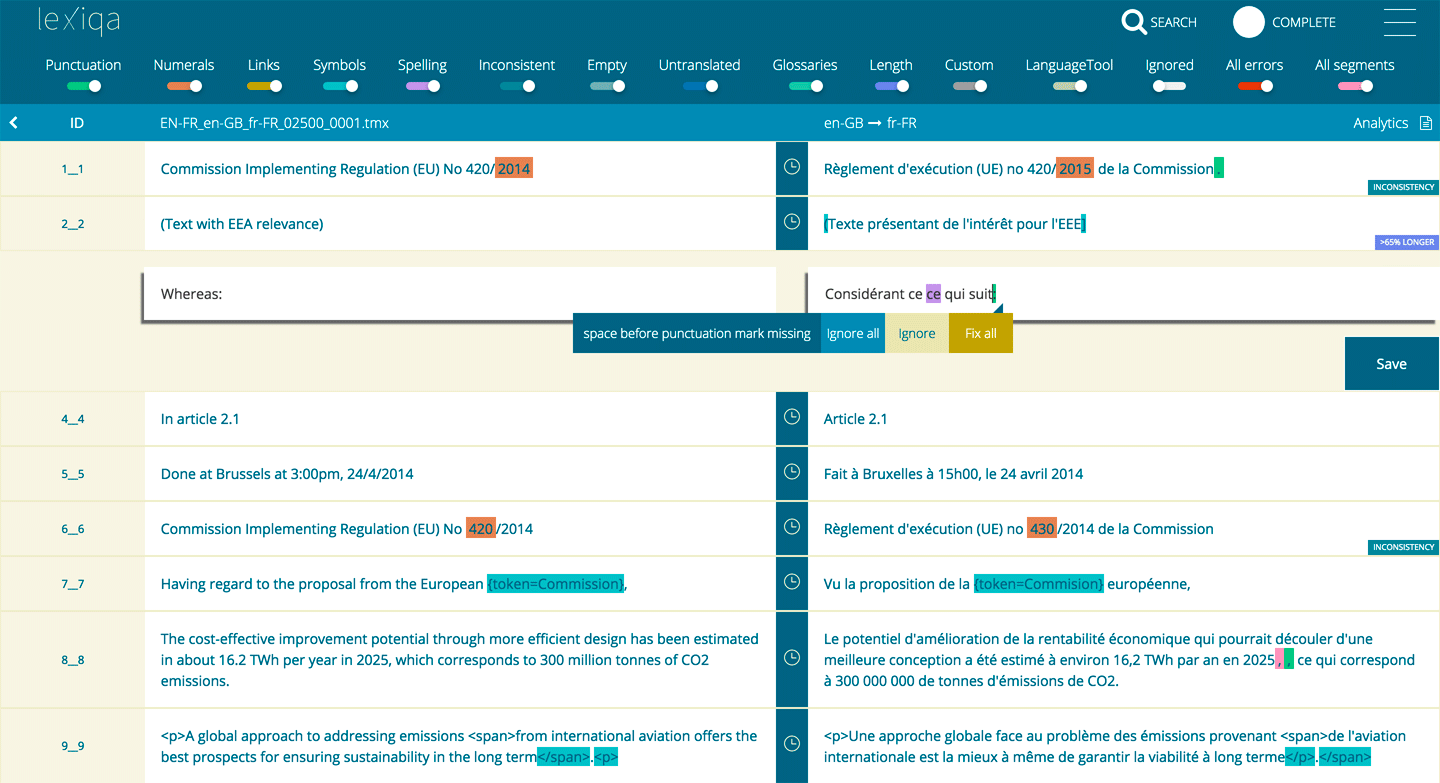

LexiQA

The second new entrant is lexiQA – from the company of the same name – which first appeared in 2016. COO Vassilis Korkas told us that its unique selling point is that it focuses on locale-specific development and knowledge to facilitate automatic error detection in more than 25 locales. Rather than treating quality assessment as a post-hoc process, lexiQA integrates into the translation process to provide real-time feedback to linguists while they are working. It has a cloud-based interface, but it also has a full API that allows it to integrate into other environments, such as smartCAT, Transifex and Memsource. In addition to its own capabilities, it uses LanguageTool for grammar checks and HunSpell for spelling. Its creators market the tool as an enterprise solution, with volume-based pricing, but several third parties have licensed it in their tools. For example, Translated currently offers lexiQA by default in MateCat's public instance.

Source: LexiQA

Korkas and the other two co-founders decided to develop lexiQA in part because they found existing solutions lacking: They were either too basic or had high false-positive rates that dragged down productivity. They therefore aimed to reduce the noise in QA processes with this tool and claim to have reached 5 to 10% false positives for their supported locales, versus a typical industry average of over 50%. Their attention to linguistic knowledge helps here and enables the tool to find issues that general tools miss. For example, the tool understands written-out forms of numbers, so it can recognize that “Zwölf” in a German text matches “12” – in digits – in English. It also addresses locale-specific date, number, and currency formats.
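To make the “Zwölf” versus “12” example concrete, here is a minimal sketch of a number-consistency check that accepts written-out numbers in the target. It is not lexiQA's implementation or API: the function names and the tiny German lexicon are hypothetical, and a real checker would need much broader, locale-specific coverage.

```python
import re
import unicodedata

# Hypothetical, deliberately tiny lexicon of German number words.
# A production checker would need far more complete locale data.
GERMAN_NUMBER_WORDS = {
    "eins": 1, "zwei": 2, "drei": 3, "vier": 4, "fünf": 5, "sechs": 6,
    "sieben": 7, "acht": 8, "neun": 9, "zehn": 10, "elf": 11, "zwölf": 12,
}


def numbers_in_source(text):
    """Return the set of integers written as digits in the source text."""
    return {int(match) for match in re.findall(r"\d+", text)}


def numbers_in_german(text):
    """Return integers found in a German text, as digits or as number words."""
    # Normalize so that "ö" typed as "o" + combining diaeresis still matches.
    text = unicodedata.normalize("NFC", text)
    found = {int(match) for match in re.findall(r"\d+", text)}
    # [^\W\d_]+ matches runs of letters, including umlauts.
    for word in re.findall(r"[^\W\d_]+", text):
        value = GERMAN_NUMBER_WORDS.get(word.lower())
        if value is not None:
            found.add(value)
    return found


def missing_numbers(source_en, target_de):
    """Numbers present in the English source but absent from the German target."""
    return numbers_in_source(source_en) - numbers_in_german(target_de)


if __name__ == "__main__":
    source = "The box holds 12 items."
    print(missing_numbers(source, "Die Kiste enthält zwölf Artikel."))
    print(missing_numbers(source, "Die Kiste enthält elf Artikel."))
```

A checker that compares only digit strings would flag the first translation as missing “12”, which is the kind of false positive the developers say they set out to reduce.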

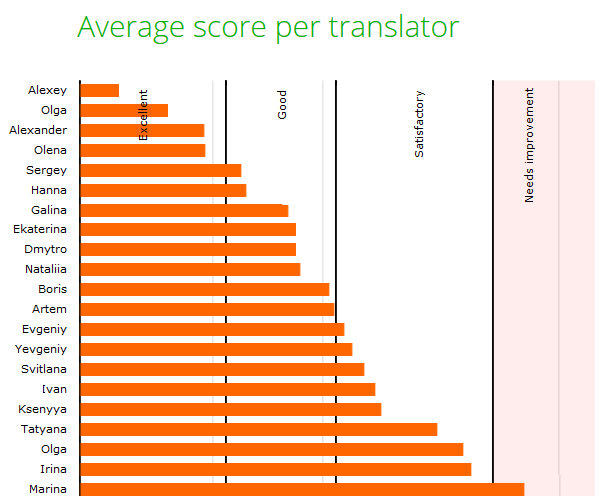

TQAuditor

Finally, we talked to Vladimir Kukharenko, CEO of Protemos, about TQAuditor, which was spun off from Ukrainian LSP Technolex. Launched last year after four years of development, TQAuditor enables translators to track corrections and view reviewer feedback for continuous learning, and it provides an anonymized mechanism to discuss and dispute changes. Reviewers categorize errors against a customizable model that defaults to a modified version of the LISA QA Model. In this respect, it resembles typical scorecard applications. Kukharenko told us that the tool’s target audience consists of mid-tier LSPs that need a quality assessment solution.

Source: Protemos

Where TQAuditor really stands out is in its deep dive into statistics. It tracks translator scores over time by vertical, project type, and other variables. It also tracks individual reviewers and their performance. Protemos’ goal is to provide data-driven insight into how individuals perform so that LSPs can select the right people for each job. We were impressed by the amount of information it makes available to adopters, more than we have seen from other tools. However, sifting through the information to make decisions can be a challenge, and LSPs may struggle to decide what to do with it. Kukharenko told us that his company is working on implementing a full API and on integrations with various translation management systems (TMSes), which will help the solution reach its full potential.
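As a rough illustration of how per-vertical score tracking could feed the choice of a translator, the sketch below averages review scores by translator and vertical and picks the highest average. It does not reflect TQAuditor's data model or API: the record layout, the names, and the sample scores are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical review records: (translator, vertical, score out of 100).
REVIEWS = [
    ("translator_a", "legal", 92.0),
    ("translator_a", "legal", 88.0),
    ("translator_a", "medical", 75.0),
    ("translator_b", "legal", 81.0),
]


def average_scores(records):
    """Average the scores for each (translator, vertical) pair."""
    grouped = defaultdict(list)
    for translator, vertical, score in records:
        grouped[(translator, vertical)].append(score)
    return {key: mean(scores) for key, scores in grouped.items()}


def best_translator(records, vertical):
    """Return the translator with the highest average in a vertical, or None."""
    averages = average_scores(records)
    candidates = {
        translator: average
        for (translator, record_vertical), average in averages.items()
        if record_vertical == vertical
    }
    if not candidates:
        return None
    return max(candidates, key=candidates.get)


if __name__ == "__main__":
    print(average_scores(REVIEWS))
    print(best_translator(REVIEWS, "legal"))
```

A plain average is the simplest possible choice here. In practice an LSP would probably also weigh the number of reviews behind each average and how recent they are before acting on a ranking like this.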

Takeaway

These three tools all take different approaches, but they share a goal of pushing quality upstream and improving on tools that have evolved slowly over the years. Each brings something unique to the table – and they are thus complementary – but they compete for space in a relatively small technology niche with entrenched players. Only time will tell if they will succeed, but the attention and capabilities they are adding to this field are welcome. As investment in quality tools heats up once again, their competitive pressure should help move the language industry past its long-time reactive model toward an active approach to quality management that includes content source optimization and smart augmentation of translators’ capabilities.

After obtaining a BA in linguistics in 1997, I began working for the now-defunct Localization Industry Standards Association (LISA), where I headed up standards development and worked on quality assessment models. At the same time, I completed a...

Connect with Arle Lommel